I've worked with AI for over two years, and I still haven't been able to articulate why some prompts feel brilliant to read and, even more so, hit the mark the moment you use them. Today I want to try explaining that elusive feeling with a simple example. Here's what I've found:

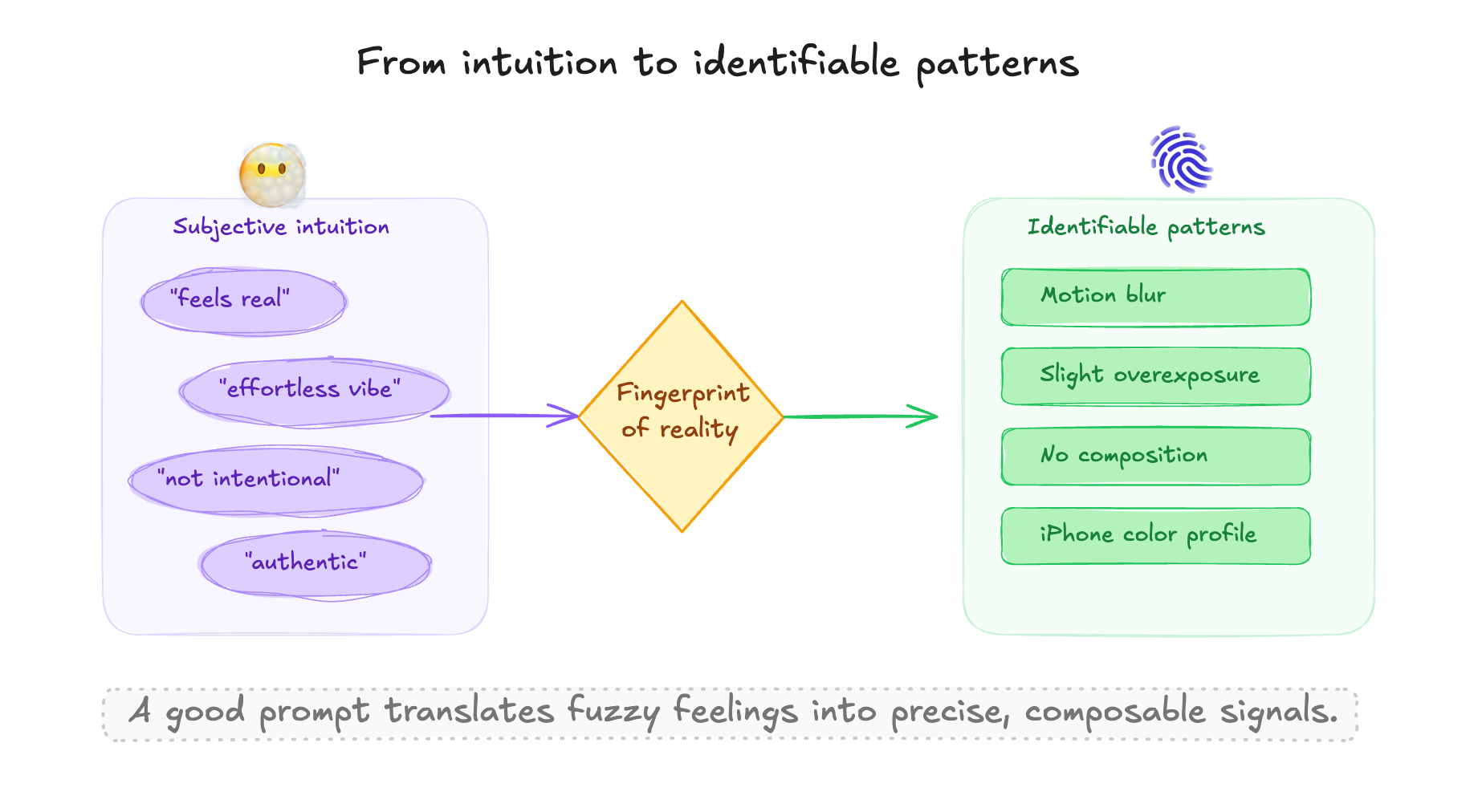

A good prompt is essentially a precise combination of features: it translates the subjective feelings humans habitually express into concrete patterns that LLMs can recognize. I think this is where the real value of AI products is encoded.

I've read this example countless times. When GPT-4o image generation launched in ChatGPT, one prompt went viral on Twitter. Three things stand out about it:

- Reverse Engineering: instead of saying "generate a realistic photo," it defines the target in reverse, aiming at the kinds of flaws common in real photos (in our subconscious, flaws actually signal authenticity).

- Feature Model: "iPhone photo," "motion blur," "slight overexposure." Each detail is a key feature extracted from reality, and combined they form a pattern with a strong signal.

- Causal Structure: it doesn't constrain the scene or dictate composition. Instead it uses a causal framework of "accidentally taking a photo while pulling out your phone," which contains all possible visual outcomes within a single "process"!

This prompt reconstructed a snapshot so mundane that it feels extremely real. It translated the subjective human judgment of "realness" into a set of identifiable, concrete "flaw" features.

It proved that "authenticity" isn't mystical. When the "mundane photo" ChatGPT generated perfectly matched the fuzzy impression of reality in my mind, it was quite stunning.

This prompt has since become a kind of proto-language for photorealistic image generation: people remix it to create all kinds of authentic-feeling selfies and phone photos.

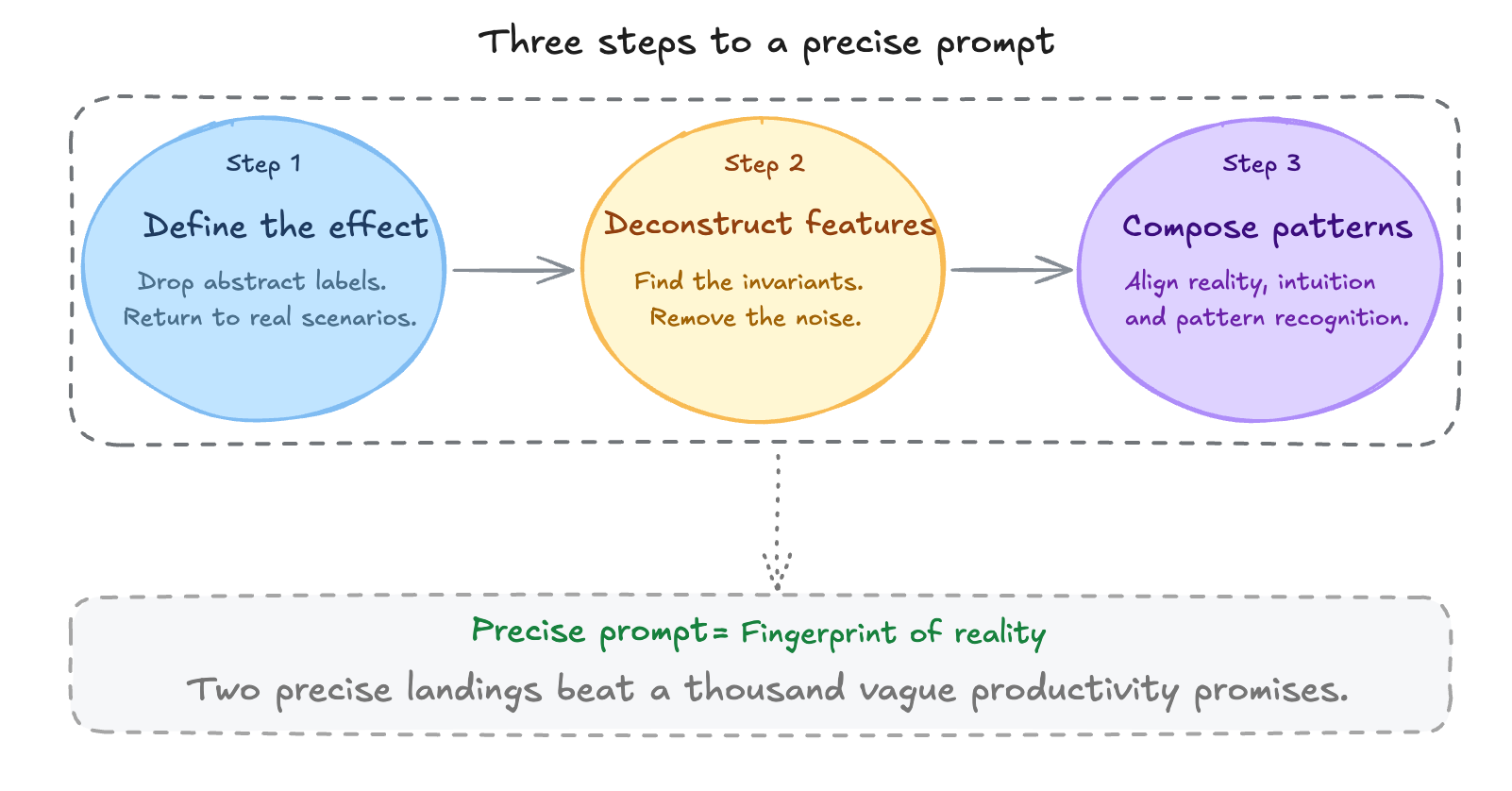
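To make the remixing idea concrete, here's a rough sketch in Python of the three ingredients from the list above: a medium, a set of flaws, and a causal frame. It's only an illustration. The `SnapshotPrompt` class, its field names, and the sentence template are all made up for this post, and the rendered text is not the original viral prompt; it just reuses the features quoted above.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class SnapshotPrompt:
    """Hypothetical container for the three ingredients (not a real library)."""
    medium: str                                      # feature: e.g. "iPhone photo"
    flaws: list[str] = field(default_factory=list)   # flaws that signal authenticity
    cause: str = ""                                  # the process that explains the flaws

    def render(self) -> str:
        # Assumed template: medium first, then flaws, then the causal frame.
        text = f"An ordinary {self.medium}"
        if self.flaws:
            text += " with " + " and ".join(self.flaws)
        if self.cause:
            text += f", as if {self.cause}"
        return text + "."


base = SnapshotPrompt(
    medium="iPhone photo",
    flaws=["motion blur", "slight overexposure"],
    cause="it was taken by accident while pulling out the phone",
)
print(base.render())

# A "remix" keeps the flaws and the causal frame and swaps a single feature.
selfie = replace(base, medium="phone selfie")
print(selfie.render())
```

The point of the structure is that a remix changes one field while the rest of the "fingerprint" stays intact, which is roughly what people do by hand when they adapt the prompt.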

I believe this prompt's real value comes from capturing what I call "fingerprints of reality": the feature combinations that make something instantly feel "real." It is a way of observing reality that turns unconscious, uncontrolled, flawed textures into a clear prompt. Such a prompt must align with a universal human intuition while also matching a stable pattern that AI can locate and produce. The thinking behind it is an act of translation, from a subjective feeling to concrete features.

This translation mindset applies to many more scenarios. People's chats with chatbots are already full of requests like these:

"An AI that doesn't feel so AI-ish," "highlight this article according to my reading taste," "design a minimalist cover"

All of these subjective feelings point to open, ambiguous semantic spaces. The fuzzy definitions living in users' minds are all waiting to be translated into bounded, clearly specified effects.

I think this is a form of "reverse engineering" that demands real understanding and insight. How you choose features, whether the combination is meaningful, and whether the AI's output delivers the feeling reliably all depend on clear, original insight. The end result is that a specific subjective feeling gets caught by the AI.

This might be my hot take, but in my value hierarchy, one clear moment of making a feeling land is worth more than a thousand vague productivity promises. The hard part of building AI applications is finding a feeling that people genuinely need and that AI happens to be able to deliver, and deliver well. Touching the "fingerprint of reality" means understanding both people and AI as they really are, and translating human needs into AI capabilities. A feeling that clearly lands is a goal worth pursuing.

I started creating content because I wanted to turn thinking into viewpoints, trying to make chaos a little clearer. But once I actually started, I realized that things I thought I understood are still hard to explain well. If anything's unclear or you disagree, I'd love to discuss — if I didn't nail it this time, there's always next time!